Using generative neural nets in Keras to create ‘on-the-fly’ dialogue

graham a

This is an old walkthrough of using Keras to train a character-level LSTM on YouTube captions, then using speech-to-text to generate a possible next line of dialogue. The code is no longer current, but it shows the basic flow I was playing with: collect sales-call subtitles, train a small generative model, speak a prompt, and have the model suggest what could come next.

Updates

6/6/23 Someone recently asked a question about this post. The original code here is no longer functional, and it was written when I had very little programming experience. I’m keeping it up for historical reference.

4/10/17 Most of the Python modules used here have changed significantly since this was written, causing issues with youtube-dl and Keras. If you are working on an updated version or already have one, please get in touch.

Introduction

There have been a few cool things done with generative neural nets, but when I wrote this, I did not know of many publicly discussed business applications. This is by no means the best use or the most interesting one, but I still think it is an interesting starting point for using generative models as training or augmentation tools.

There’s a lot of potential for this and similar tools, and I’d love to work on or collaborate with others on something like this. If you are interested, contact me at the email listed at the bottom of this post.

Start

My initial plan started with the idea that subtitles on YouTube videos could make a good database of conversational dialogue. They are not perfect, but they do seem to capture some of the inflections and peculiarities of human speech that written text does not always capture, along with a variety of ways that conversations flow.

The training set to create this model is just a collection of YouTube videos that deal with sales or call-oriented dialogue. For instance, here are a couple of videos with subtitles:

The only real reasons I used these videos were that they are long, may contain phone dialogue, and already have subtitles or closed captions. I tried to find videos where the captions seemed somewhat accurate, but there are obvious errors, and their effect on the training set is noticeable.

From here, all that is necessary is to create a fairly large corpus. Around 500,000 characters is the minimum I would aim for.

A Python script that uses youtube-dl and pysrt makes a quick pipeline for grabbing the subtitles or closed captions from a lot of videos.

import os
import urllib.request

import numpy as np
import pysrt
import youtube_dl


class audio_source(object):
    """Fetch the English subtitles or closed captions for one YouTube URL."""

    def __init__(self, url):
        self.url = url
        self.ydl_opts = {
            # youtube-dl takes the subtitle languages as a list
            'subtitleslangs': ['en'],
            'writesubtitles': True,
            'writeautomaticsub': True}
        self.subtitlesavailable = self.are_subs_available()
        if self.subtitlesavailable:
            self.grab_auto_subs()

    def are_subs_available(self):
        # ask youtube-dl for the video metadata only; nothing is downloaded
        with youtube_dl.YoutubeDL(self.ydl_opts) as ydl:
            subs = ydl.extract_info(self.url, download=False)
        if subs.get('requested_subtitles'):
            self.title = subs['title']
            self.subs_url = subs['requested_subtitles']['en']['url']
            return True
        else:
            return False

    def grab_auto_subs(self):
        """
        grabs subs or cc depending on what's available,
        think it grabs both if subtitles are available
        issue with ydl_opts but doesn't bother me
        """
        try:
            # make sure the output folder exists before saving into it
            os.makedirs('youtube-dl-texts', exist_ok=True)
            urllib.request.urlretrieve(
                self.subs_url, 'youtube-dl-texts/' + self.title + '.srt')
            print("subtitles saved directly from youtube\n")
            text = pysrt.open('youtube-dl-texts/' + self.title + '.srt')
            self.text = text.text.replace('\n', ' ')
        except IOError:
            print("\n *** saving subs didn't work *** \n")


# one video URL per line
with open('other/url_list', 'r') as datafile:
    url_list = datafile.read().splitlines()

total_text = []
for u in url_list:
    try:
        total_text.append(audio_source(url=u).text)
    except AttributeError:
        # no subtitles were found or saved for this video, so it has no .text
        pass

total_text = ' '.join(total_text).lower()
print(len(total_text))

>>>

Training the generative neural net

At this point, you have a mass of text that would look quite incoherent and useless if you actually read it. I am also not separating the individual transcripts like many others have, which would probably be useful for determining when a conversation should end, etc. Hopefully there is enough data to get a usable result for the time being, and the caption errors will “regress to the mean”.

Here are the last 260 characters of the dialogue I have, from slightly less than 1 MB worth of text from videos:

print(total_text[-260:])

>>>'more information about those meetings and travel make sure to fax it to this number at the bottom and are you into the grand prize drawing weeks stay at intercontinental resort Tahiti be sure to fax in that form you all right thank you feel you have a great day'
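The corpus above is one long string with no marker between videos. If you did want to keep the boundaries, one option is to end each transcript with a reserved character so the model has a chance to learn where a conversation stops. This is a minimal sketch of something I did not do in this post: `END_MARK` and `join_transcripts` are hypothetical names, and the marker is assumed to be a character that never appears in the cleaned captions.

```python
# Hypothetical end-of-transcript marker. Newlines are a reasonable choice here
# because the caption text has its newlines replaced by spaces already.
END_MARK = "\n"


def join_transcripts(transcripts, end_mark=END_MARK):
    """Lowercase each transcript and terminate it with end_mark."""
    cleaned = []
    for text in transcripts:
        # remove any stray marker characters so the marker stays unambiguous
        text = text.lower().replace(end_mark, " ").strip()
        if text:  # skip empty transcripts
            cleaned.append(text + end_mark)
    return "".join(cleaned)


corpus = join_transcripts(["Hello there ", "Thanks for calling"])
print(repr(corpus))
```

With a marker like this in the vocabulary, generation could stop as soon as the model predicts it instead of always running for a fixed number of characters.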

To train the model, we first need to do a bit of preprocessing, since the generative neural net consumes the text as a sequence of characters. Technically it is fed fixed-length windows (maxlen characters each), but every step within a window is still a single character. A fair amount of this preprocessing is from the Keras LSTM text generation example.

# sorted so the character-to-index mapping is the same on every run
chars = sorted(set(total_text))
char_indices = dict((c, i) for i, c in enumerate(chars))
indices_char = dict((i, c) for i, c in enumerate(chars))

# cut the text into overlapping windows of maxlen characters;
# the character right after each window is the prediction target
maxlen = 20
step = 1
sentences = []
next_chars = []
for i in range(0, len(total_text) - maxlen, step):
    sentences.append(total_text[i: i + maxlen])
    next_chars.append(total_text[i + maxlen])
print('nb sequences:', len(sentences))

# one-hot encode the windows (X) and their next characters (y)
X = np.zeros((len(sentences), maxlen, len(chars)), dtype=bool)
y = np.zeros((len(sentences), len(chars)), dtype=bool)
for i, sentence in enumerate(sentences):
    for t, char in enumerate(sentence):
        X[i, t, char_indices[char]] = 1
    y[i, char_indices[next_chars[i]]] = 1
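To see what the windowing loop above actually produces, here is the same loop run on a toy string with a shorter window. The `toy_` names and the window length of 5 are just for illustration; the real run uses maxlen = 20 on the full corpus.

```python
toy_text = "hello world"
toy_maxlen = 5  # illustrative window length (the post uses maxlen = 20)
toy_step = 1

toy_sentences = []
toy_next_chars = []
for i in range(0, len(toy_text) - toy_maxlen, toy_step):
    toy_sentences.append(toy_text[i: i + toy_maxlen])  # input window
    toy_next_chars.append(toy_text[i + toy_maxlen])    # character to predict

# each window is paired with the single character that follows it
for window, target in zip(toy_sentences, toy_next_chars):
    print(repr(window), '->', repr(target))
```

With a step of 1, the number of training pairs is the text length minus the window length, so the one-hot X array grows quickly with corpus size.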

LSTM training

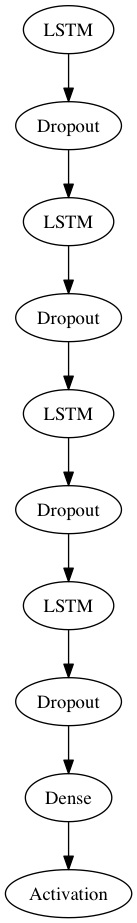

For the NN library, I am using Keras. It is so far my favorite Python NN library because of how modular and easy to understand it is (and the creator and contributors seem incredibly smart), and it makes quick prototyping and experimentation easy. For my example, an LSTM-based RNN architecture was the most effective way I found to generate useful results.

One of the better models I found:

from keras import Input
from keras.models import Sequential
from keras.layers import Activation, Dense, Dropout, LSTM

model = Sequential()
# one-hot characters in; None leaves the sequence length open
model.add(Input(shape=(None, len(chars))))
model.add(LSTM(512, return_sequences=True))
model.add(Dropout(0.20))
# use 20% dropout on all LSTM layers: http://arxiv.org/abs/1312.4569
model.add(LSTM(512, return_sequences=True))
model.add(Dropout(0.20))
model.add(LSTM(256, return_sequences=True))
model.add(Dropout(0.20))
# the last LSTM returns only its final state, which feeds the classifier
model.add(LSTM(256, return_sequences=False))
model.add(Dropout(0.20))
# one output per character, turned into probabilities by the softmax
model.add(Dense(len(chars)))
model.add(Activation('softmax'))

# compile or load weights then compile depending
model.compile(loss='categorical_crossentropy', optimizer='rmsprop')
model.fit(X, y, epochs=50)

>>> Epoch 0

>>> 7744/285648 [>.............................] - ETA: 4717s - loss: 3.0232

If you would like to see a rudimentary visualization of the architecture:

This will probably take quite a while to train, but GPU-based training dramatically speeds up the .fit() process. It is hard to name a target loss for certain, but a loss that levels off at a minimum around 0.5 is ideal.
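For a rough feel of what those loss numbers mean: categorical cross-entropy is the average negative natural log of the probability the model gave to the correct next character, so exp(-loss) is the geometric-mean probability assigned to the right character. The sketch below just does that arithmetic for the starting loss in the log above and for the 0.5 target; the helper names are mine, not part of Keras.

```python
import math


def mean_correct_prob(loss):
    """Geometric-mean probability given to the true next character."""
    return math.exp(-loss)


def uniform_loss(vocab_size):
    """Loss of a model that guesses uniformly over vocab_size characters."""
    return math.log(vocab_size)


for loss in (3.0232, 0.5):
    print(f"loss {loss:.4f} -> p(correct) ~ {mean_correct_prob(loss):.3f}")
```

Read this way, a loss near 3.0 is close to guessing uniformly among roughly 20 characters, while a loss near 0.5 means the model puts about 60% of its probability on the right character on average.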

At this point, a model is trained and we are ready to generate some recommended dialogue.

Generate some text

The final part of this is speaking something to your computer (or potentially, having the computer listen to what you or someone else is saying in some app or extension), turning that speech into text, and using it to generate a suggestion for what should follow.

There are a few ways to do this, but the easiest is to get an API key for Google speech-to-text and install the libraries needed to use the Python SpeechRecognition module.

Use a personal key in the Recognizer to avoid abusing the built-in API token. You need to subscribe to a mailing list and then enable the API, but it takes about 2 minutes.

You can incorporate whatever was spoken into the model as well, but that’s for a later date. Right now, all I will do is set it up so you speak to it for a moment and then it generates some text and prints that out.

import speech_recognition as sr

recognizer = sr.Recognizer()


def speech2text(r=recognizer):
    # speak to microphone, use google api, return text
    input('press enter then speak: \n' + '------' * 5)
    with sr.Microphone() as source:
        audio = r.listen(source)
    try:
        print('\nprocessing...\n')
        # myKey is your personal Google speech API key
        return r.recognize_google(audio, key=myKey).lower()
    except (sr.UnknownValueError, sr.RequestError):
        # speech was unintelligible or the API request failed
        return ''


def gentext():
    # keep only the characters the model saw during training
    seed_text = ''.join(c for c in speech2text() if c in char_indices)
    if not seed_text:
        print('nothing recognized, try again')
        return
    generated = '' + seed_text
    print('------' * 5 + '\nyou said: \n' + '"' + seed_text + '"')
    print('------' * 5 + '\n generating...\n' + '------' * 5)
    for iteration in range(50):
        # create x vector from seed to predict off of
        x = np.zeros((1, len(seed_text), len(chars)))
        for t, char in enumerate(seed_text):
            x[0, t, char_indices[char]] = 1.
        preds = model.predict(x, verbose=0)[0]
        # greedy choice: always take the most likely next character
        next_index = np.argmax(preds)
        next_char = indices_char[next_index]
        generated += next_char
        # slide the seed forward by one character
        seed_text = seed_text[1:] + next_char
    print('\n\nfollow up with: ' + generated)

Here’s one of the better single examples I encountered with this model after fitting:

press enter then speak:

------------------------------

processing...

------------------------------

you said:

"i would like to talk to you about a house i saw that you had for sale"

------------------------------

generating...

------------------------------

follow up with:

i would like to talk to you about a house i saw that you had for sale tell me what was its price though and i can reall
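The generation loop above always takes the single most likely next character (np.argmax), which is simple but tends to repeat itself. A common alternative is to sample from the predicted distribution with a "temperature" knob. The sketch below is one way that could look, written in plain Python so it runs on its own; `sample_index` is a hypothetical helper, not something from the original code.

```python
import math
import random


def sample_index(preds, temperature=1.0, rng=random):
    """Pick an index from a list of probabilities, reweighted by temperature."""
    # Rescale log-probabilities: a small temperature sharpens the
    # distribution, a large one flattens it.
    logits = [math.log(max(p, 1e-12)) / temperature for p in preds]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(value - peak) for value in logits]
    return rng.choices(range(len(preds)), weights=weights, k=1)[0]


rng = random.Random(0)  # seeded so repeated runs draw the same samples
preds = [0.7, 0.2, 0.1]
draws = [sample_index(preds, temperature=0.5, rng=rng) for _ in range(1000)]
print([draws.count(i) for i in range(3)])
```

Inside gentext, a call like this would stand in for np.argmax(preds). A temperature close to zero behaves almost like the greedy choice, while values near 1.0 follow the model's own probabilities.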

Performance note

On my 2013 MacBook Pro, training and predicting with the GPU is about 3-4x faster than with the CPU.

Conclusion

With something like this, it’s very easy to see how you could splice in audio from a phone call or text chat and have this carry over pretty well. Given the right datasets, there are tons of potential uses. You could also stack and blend models together to provide different dialogue models and help differentiate people within dialogue.

If you are interested in hearing more about this type of stuff, feel free to reach out.